This article was originally published in 2008 and has since been updated to include current information. An archived version from 2008 is available for reference.

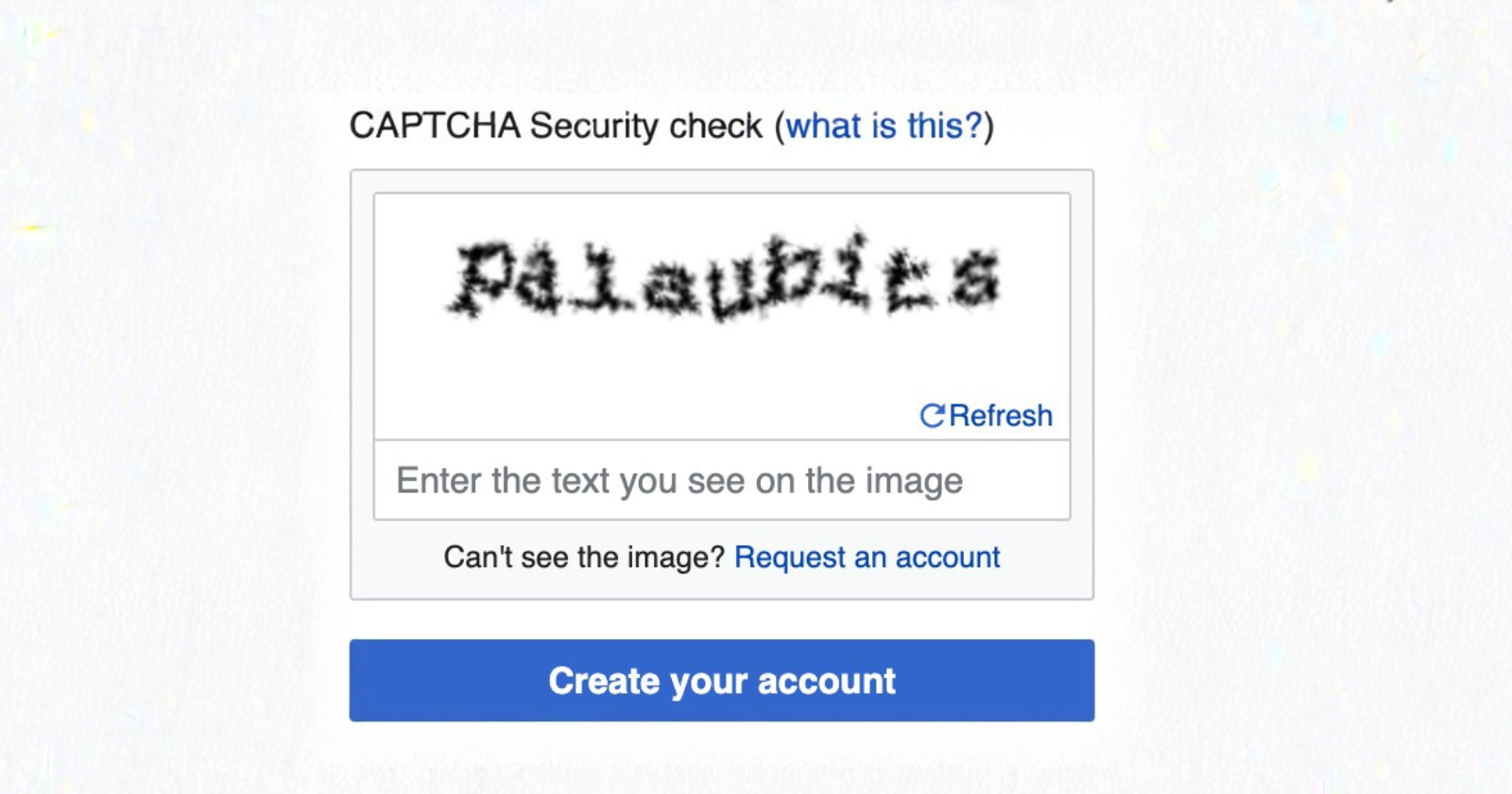

There is something quietly absurd about a security system that frustrates the people it is supposed to protect while barely slowing down the attackers it was designed to stop. That has always been the central irony of CAPTCHA, and years of iteration have done little to fix it.

If anything, the gap between the friction imposed on genuine readers and the actual security delivered has grown wider. The case against CAPTCHA in blog comment sections has been building for a long time. Today, it is essentially closed.

The original logic was sound in theory. CAPTCHA, which stands for Completely Automated Public Turing test to tell Computers and Humans Apart, was built on the idea that certain cognitive tasks come naturally to humans but remain difficult for machines.

Distorted text, image recognition, simple pattern matching. Pass the test, prove your humanity, earn the right to comment. The problem is that the assumptions behind that logic have been dismantled piece by piece, first slowly and then very quickly.

How the system broke down

The cracks appeared early. Back in 2005, a software developer named Casey Chesnut wrote a CAPTCHA-breaking algorithm and used it to post automated comments to nearly a hundred blogs in order to demonstrate how fragile the protection was. That was the first clear signal.

The response from CAPTCHA developers was to make the challenges harder, which meant making them harder for everyone, including the real readers you actually want engaging with your content.

That pattern has repeated itself ever since. Each round of increased complexity buys a little time and costs a lot of goodwill. Twisted letters become illegible. Image grids become ambiguous. Audio alternatives frustrate users with visual impairments rather than helping them. The security gains are temporary. The user experience damage is permanent.

In one large study, bots solved distorted-text CAPTCHAs with a stunning 99.8% accuracy, surpassing the rate at which actual humans completed them successfully, which sat somewhere between 50 and 86 percent. Read that again. The machine is better at passing the human test than the human is. The fundamental premise of CAPTCHA has been inverted.

Modern AI systems, particularly multi-modal AI, can simultaneously process images, text, speech, and video, allowing them to solve CAPTCHA challenges faster and more reliably than ever before. Services like CapSolver and CaptchaAI have made automated bypassing cheap and widely accessible. This is not a niche capability reserved for sophisticated actors. It is off-the-shelf infrastructure.

The human element was always the real problem

Even before AI made automated bypassing trivial, spammers had already found a simpler solution: just hire humans.

Low-cost workers could be paid to solve CAPTCHAs manually, making the whole verification system irrelevant against anyone willing to spend a small amount of money. The challenge was never truly the barrier it appeared to be.

This points to a deeper flaw in the CAPTCHA philosophy. Spam is a content problem, not a humanity problem. A comment that promotes a scam, floods a thread with irrelevant links, or degrades the quality of your site does exactly the same damage whether it was written by a bot or typed by a person.

Related Stories from The Blog Herald

- A Substack writer with 20,000 subscribers laid out her seven-step growth framework. Here’s what it reveals about how the platform actually works in 2026

- Writers who go quiet for months aren’t blocked — they’re waiting for the distance that turns experience into something they can actually use

- 10 communication skills every blogger should hone

Research suggests that roughly half of all CAPTCHAs passed are now completed by bots rather than real users, but even if that number were zero, human spammers would still exist. Proving someone is human does nothing to prove their intent is genuine.

What actually works

The answer that has held up over time is content-based filtering rather than access-based gatekeeping.

Tools like Akismet analyze the substance of a comment against a constantly updated global database of known spam patterns. According to Akismet, its machine learning filters out comment, form, and text spam with 99.99% accuracy and has caught more than 554 billion spam comments to date. That volume reflects the scale of the problem: on popular WordPress sites, spam can account for as much as 85% of all submissions.

That scale matters because it feeds the model. Every spam report from every site using Akismet strengthens the detection for all of them. The system improves through collective experience rather than through making honest readers prove their identity before they can say something. The protection operates in the background. The reader never sees it.
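To make content-based filtering concrete, here is a minimal Python sketch of how a site might ask Akismet to judge a single comment. It assumes the `comment-check` endpoint and the parameter names (`api_key`, `blog`, `user_ip`, `comment_content` and so on) as we understand them from Akismet's public REST API documentation, so check the current docs before relying on it. The function names `build_comment_check` and `is_spam` are hypothetical, and the code only builds the request and interprets the reply; it sends nothing.

```python
from urllib.parse import urlencode
from urllib.request import Request

# Endpoint as we understand Akismet's REST API docs; verify before use.
AKISMET_COMMENT_CHECK = "https://rest.akismet.com/1.1/comment-check"


def build_comment_check(api_key: str, blog_url: str, user_ip: str,
                        comment_content: str, comment_author: str = "",
                        user_agent: str = "") -> Request:
    """Build (but do not send) a form-encoded comment-check POST request."""
    params = {
        "api_key": api_key,
        "blog": blog_url,
        "user_ip": user_ip,
        "user_agent": user_agent,
        "comment_type": "comment",
        "comment_author": comment_author,
        "comment_content": comment_content,
    }
    body = urlencode(params).encode("utf-8")
    return Request(
        AKISMET_COMMENT_CHECK,
        data=body,
        headers={"Content-Type": "application/x-www-form-urlencoded"},
        method="POST",
    )


def is_spam(response_body: str) -> bool:
    """Interpret the plain-text reply: 'true' means spam, 'false' means not spam."""
    text = response_body.strip()
    if text == "true":
        return True
    if text == "false":
        return False
    # Anything else (for example 'invalid') points to a bad request or API key.
    raise ValueError(f"unexpected Akismet response: {text!r}")
```

To actually submit it, you would pass the request to the standard library's `urllib.request.urlopen` and hand the decoded response body to `is_spam`. On WordPress the Akismet plugin handles all of this for you; the sketch only shows roughly what the plugin exchanges with the service.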

Honeypot fields work on an entirely different principle and complement Akismet well. A honeypot is a hidden form field that human users never see and therefore never fill in.

Bots, which are designed to populate every field they find, fill it automatically and identify themselves in the process. The comment gets flagged as spam before it ever reaches your moderation queue. Plugins like WP Armour handle this silently with no setup required, no API calls, and no friction for your readers.
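The honeypot idea fits in a few lines. The Python sketch below is illustrative only: the field name `website_confirm` and both helper functions are hypothetical, and real plugins such as WP Armour use their own markup and checks.

```python
# Hypothetical name for the hidden field; real plugins choose their own.
HONEYPOT_FIELD = "website_confirm"


def render_honeypot() -> str:
    """HTML for a text field pushed off-screen so human readers never see it."""
    return (
        '<div style="position:absolute;left:-9999px" aria-hidden="true">'
        f'<label for="{HONEYPOT_FIELD}">Leave this empty</label>'
        f'<input type="text" id="{HONEYPOT_FIELD}" name="{HONEYPOT_FIELD}" '
        'tabindex="-1" autocomplete="off">'
        "</div>"
    )


def tripped_honeypot(form: dict[str, str]) -> bool:
    """True if the hidden field came back with any non-whitespace content."""
    return bool(form.get(HONEYPOT_FIELD, "").strip())
```

The field is moved off-screen rather than declared `type="hidden"`, on the assumption that naive bots skip inputs explicitly marked hidden but fill anything that looks like a normal text box. `tabindex="-1"` keeps keyboard users from landing on it, and the label tells anyone who does encounter it to leave it blank.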

Rate limiting adds another quiet layer of protection. By restricting how many comment submissions can come from a single IP address within a given time window, it makes bulk automated attacks impractical without affecting genuine readers. Many WordPress security plugins include this functionality, and it runs entirely without reader interaction.
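A sliding-window limiter is one common way to implement this. The sketch below keeps an in-memory record of recent submission times per IP address; the class name and the default of three comments per 60 seconds are hypothetical choices, and a site running several server processes would need shared storage instead.

```python
import time
from collections import defaultdict, deque
from typing import Optional


class CommentRateLimiter:
    """Allow at most `max_posts` accepted comments per IP within `window_seconds`."""

    def __init__(self, max_posts: int = 3, window_seconds: float = 60.0):
        # Hypothetical defaults; tune them for your own traffic.
        self.max_posts = max_posts
        self.window = window_seconds
        self._hits = defaultdict(deque)  # ip -> timestamps of accepted comments

    def allow(self, ip: str, now: Optional[float] = None) -> bool:
        """Return True and record the hit if this IP is still under its limit."""
        now = time.monotonic() if now is None else now
        hits = self._hits[ip]
        # Forget timestamps that have slid out of the window.
        while hits and now - hits[0] >= self.window:
            hits.popleft()
        if len(hits) >= self.max_posts:
            return False  # over the limit: reject, and do not record the attempt
        hits.append(now)
        return True
```

Rejected attempts are deliberately not recorded, so the limit depends only on accepted comments. Counting rejections as well would keep persistent bots locked out for longer, at the cost of occasionally penalizing a reader who simply retries.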

Moderation queues for first-time commenters catch the edge cases that slip past everything else. Holding a first comment for approval is standard practice, costs nothing in terms of user experience for returning readers, and gives you a final check on anything unfamiliar.
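WordPress ships a related option under Settings > Discussion ("Comment author must have a previously approved comment"). The Python sketch below shows the underlying rule in its simplest form; the `ModerationPolicy` class and its choice to key on a normalized email address alone are illustrative assumptions, not how WordPress itself matches commenters.

```python
from dataclasses import dataclass, field


@dataclass
class ModerationPolicy:
    """Hold a commenter's comments until one of theirs has been approved."""

    approved_emails: set[str] = field(default_factory=set)

    @staticmethod
    def _key(email: str) -> str:
        # Normalize so "Ana@Example.com " and "ana@example.com" are treated alike.
        return email.strip().lower()

    def route(self, author_email: str) -> str:
        """Return "publish" for previously approved commenters, "hold" otherwise."""
        return "publish" if self._key(author_email) in self.approved_emails else "hold"

    def approve(self, author_email: str) -> None:
        """Record a moderator's approval so this author's future comments skip the queue."""
        self.approved_emails.add(self._key(author_email))
```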

With these tools working together, the need for CAPTCHA disappears entirely.

The cost of getting this wrong

There is a version of this conversation that stays abstract, talking about security models and spam rates and AI accuracy figures. But the real cost is simpler and more personal.

Every time a reader reaches the end of something you have written, feels moved enough to respond, and then encounters a CAPTCHA, you have introduced a moment of friction into what should have been an act of connection.

Some will push through. Many will not. The ones who do push through sometimes fail the test and lose their comment entirely. A frustrating experience with no reward and no explanation. For a first-time visitor, that is often enough to ensure there is no second visit.

Accessibility compounds the problem further. Stronger CAPTCHA systems often impact accessibility for people with vision impairments, and when accessible alternatives exist, they are typically weaker, allowing adversaries to bypass the AI-resistant version by using the accessibility route instead. The security gains evaporate precisely where the human cost is highest.

A blog that makes readers prove themselves before allowing them to speak is a blog that has quietly decided engagement is a privilege rather than a welcome. That is the wrong orientation for anyone serious about building a genuine community around their work.

Where to go from here

The practical case is straightforward. If you are running a WordPress blog and still relying on CAPTCHA to filter comment spam, the combination of Akismet, a honeypot plugin, and a moderation queue for first-time commenters will outperform it on every measure: better detection, fewer false positives, and zero friction for the people you actually want to hear from.

The deeper principle here extends beyond spam protection. Every piece of friction on a blog is a choice, and choices compound.

Readers who feel welcomed and respected are more likely to return, share, and engage over time. Readers who feel interrogated tend to leave quietly and not come back.

Protecting your comment section matters. How you do it matters more than whether you do it. CAPTCHA was always the wrong answer to the right question, and better answers have been available for years.
